Your Community Door

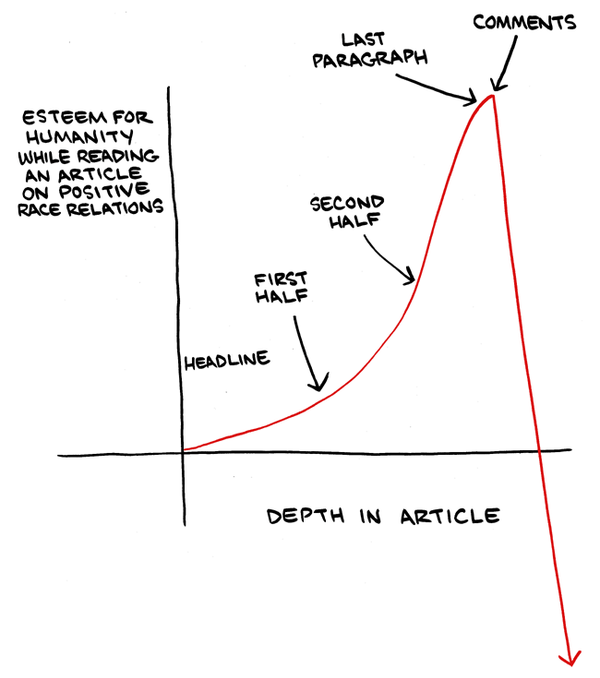

What are the real-world consequences of signing up for a Twitter or Facebook account through Tor and spewing hate toward other human beings?

Facebook reviewed the comment I reported and found it doesn’t violate their Community Standards. – Rob Beschizza (@Beschizza) October 15, 2014

As far as I can tell, nothing. There are barely any online consequences, even if the content is reported.

But there should be.

The problem is that Twitter and Facebook aim to be discussion platforms for “everyone,” where every person, no matter how hateful and crazy they may be, gets a turn on the microphone. They get to be heard.

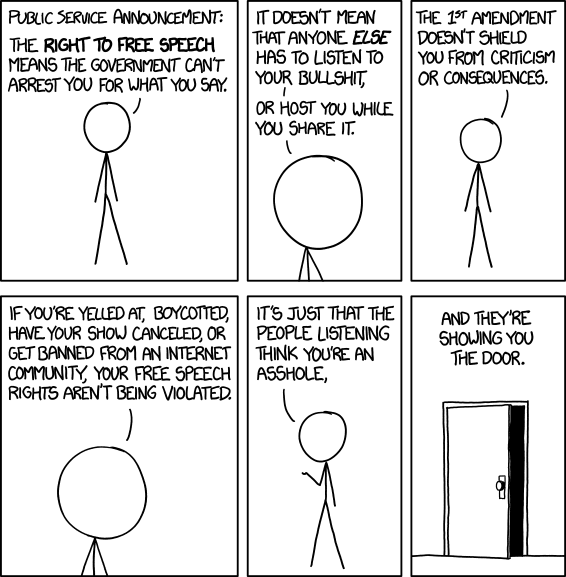

The hover text for this one is so good it deserves escalation:

I can’t remember where I heard this, but someone once said that defending a position by citing free speech is sort of the ultimate concession; you’re saying that the most compelling thing you can say for your position is that it’s not literally illegal to express.

If the discussion platform you’re using aims to be a public platform for the whole world, there are some pretty terrible things people can do and say to other people there with no real consequences, under the noble banner of free speech.

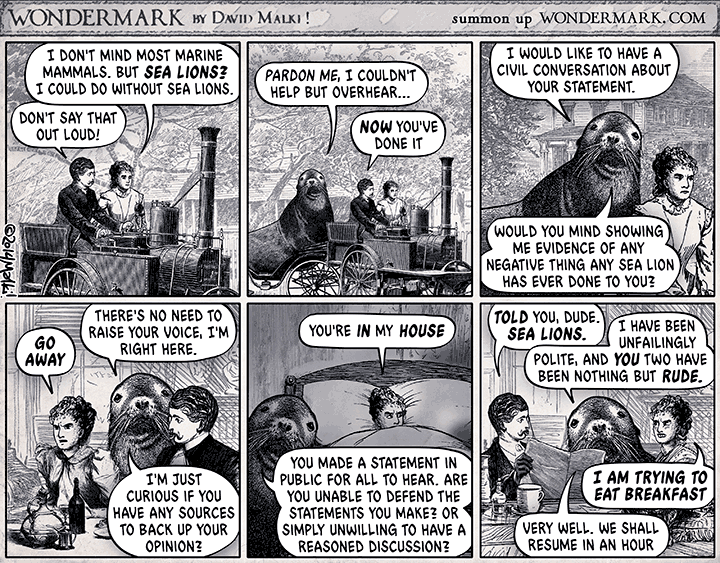

It can be challenging.

How do we show people like this the door? You can block, you can hide, you can mute. But what you can’t do is show them the door, because it’s not your house. It’s Facebook’s house. It’s their door, and the rules say the whole world has to be accommodated within the Facebook community. So mute and block and so forth are the only options available. But they are anemic, barely workable options.

As we build Discourse, I’ve discovered that I am deeply opposed to mute and block functions. I think that’s because the whole concept of Discourse is that it is your house. And mute and ignore, while arguably unavoidable for large worldwide communities, are actively dangerous for smaller communities. Here’s why.

- It allows you to ignore bad behavior. If someone is hateful or harassing, why complain? Just mute. No more problem. Except everyone else still gets to see a person being hateful or harassing to another human being in public. Which means you are now sending a message to all other readers that this is behavior that is OK and accepted in your house.

- It puts the burden on the user. This is a kind of victim blaming: if someone is rude to you, then “why didn’t you just mute / block them?” The solution is right there in front of you, so why didn’t you learn to use the software right? Why don’t you take some responsibility and take action to stop the person abusing you? Every single time it happens, over and over again?

- It does not address the problematic behavior. A mute is invisible to everyone. So the person who is getting muted by 10 other users is getting zero feedback that their behavior is causing problems. It’s also giving zero feedback to moderators that this person should probably get an intervention at the very least, if not an outright suspension. It’s so bad that people are building their own crowdsourced block lists for Twitter.

- It causes discussions to break down. Fine, you mute someone, so you “never” see that person’s posts. But then another user you like quotes the muted user in their post, or references their @name, or replies to their post. Do you then suppress just the quoted section? Suppress the @name? Suppress all replies to their posts, too? This leaves big holes in the conversation and presents many hairy technical challenges. Given enough personal mutes and blocks and ignores, all conversation becomes a weird patchwork of partially visible statements.

- This is your house and your rules. This isn’t Twitter or Facebook or some other giant public website with an expectation that “everyone” will be welcome. This is your house, with your rules, and your community. If someone can’t behave themselves to the point that they are consistently rude and obnoxious and unkind to others, you don’t ask the other people in the house to please ignore it – you ask them to leave your house. Engendering some weird expectation of “everyone is allowed here” sends the wrong message. If you go down that road, your house no longer belongs to you, and that’s a very bad place to be.

I worry that people are learning the wrong lessons from how poorly Twitter and Facebook handle these situations. Their hands are tied because they aspire to be these global communities where free speech trumps basic human decency and empathy.

The greatest power of online discussion communities, in my experience, is that they don’t aspire to be global. You set up a clubhouse with reasonable rules your community agrees upon, and anyone who can’t abide by those rules needs to be gently shown the door.

Don’t pull this wishy-washy, noncommittal stuff that Twitter and Facebook do. Community rules are only meaningful if they are actively enforced. You need to be willing to say this to people, at times:

No, your behavior is not acceptable in our community; “free speech” doesn’t mean we are obliged to host your content, or listen to you being a jerk to people. This is our house, and our rules.

If they don’t like it, fortunately there’s a whole Internet of other communities out there. They can go try a different house. Or build their own.

The goal isn’t to slam the door in people’s faces – visitors should always be greeted in good faith, with a hearty smile – but simply to acknowledge that in those rare but inevitable cases where good faith breaks down, a well-oiled front door will save your community.