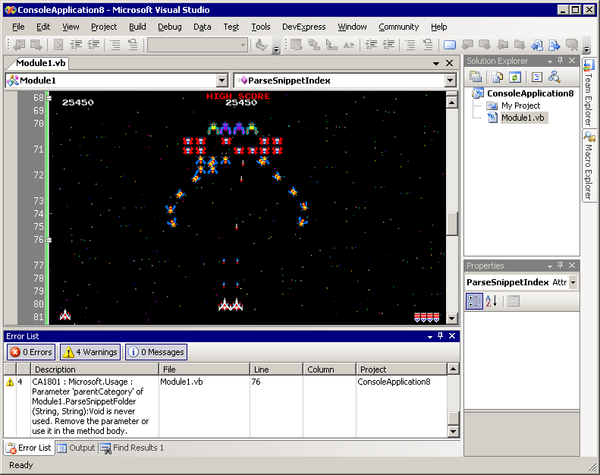

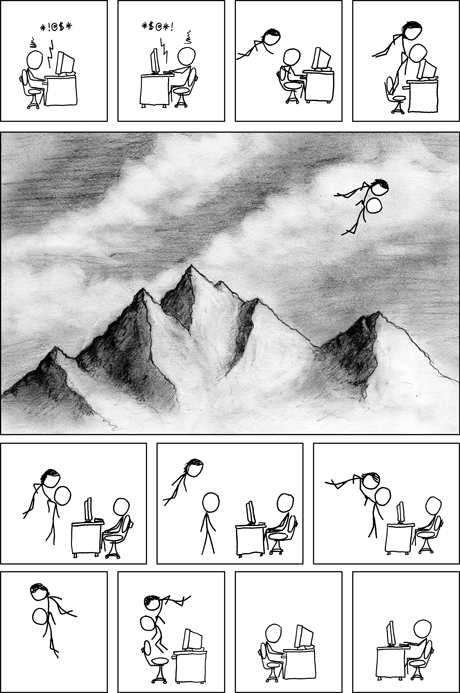

Our Programs Are Fun To Use

These two imaginary guys influenced me heavily as a programmer. Instead of guaranteeing fancy features, compatibility, or error-free operation, Beagle Bros software promised something else altogether: fun. Playing with the Beagle Bros' quirky Apple II floppies in middle school and high school, and the smorgasbord of oddball hobbyist