Microsoft LogParser

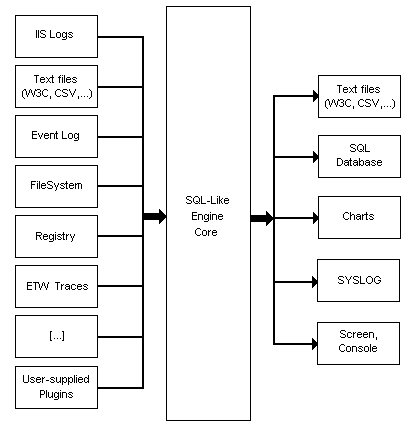

Ask yourself this question: what if everything could be queried with SLQ? Microsoft’s LogParser does just that. It lets you slice and dice a variety of log file types using a common SQL-like syntax. It’s an incredibly powerful concept, and the LogParser implementation doesn’t disappoint. This architecture diagram from the LogParser documentation explains it better than I could:

The excellent forensic IIS log exploration with LogParser article is a good starting point for sample LogParser IIS log queries. Note that I am summarizing just the SQL clauses; I typically output to the console, so the actual, complete command line would be

logparser “(sql clause)” -rtp:-1

Top 10 items retrieved:

SELECT TOP 10 cs-uri-stem as Url, COUNT(cs-uri-stem) AS Hits FROM ex*.log GROUP BY cs-uri-stem ORDER BY Hits DESC

Top 10 slowest items:

SELECT TOP 10 cs-uri-stem AS Url, MIN(time-taken) as [Min], AVG(time-taken) AS [Avg], max(time-taken) AS [Max], count(time-taken) AS Hits FROM ex*.log WHERE time-taken < 120000 GROUP BY Url ORDER BY [Avg] DESC

All Unique Urls retrieved:

SELECT DISTINCT TO_LOWERCASE(cs-uri-stem) AS Url, Count(*) AS Hits FROM ex*.log WHERE sc-status=200 GROUP BY Url ORDER BY Url

HTTP errors per hour:

SELECT date, QUANTIZE(time, 3600) AS Hour, sc-status AS Status, COUNT(*) AS Errors FROM ex*.log WHERE (sc-status >= 400) GROUP BY date, hour, sc-status HAVING (Errors > 25) ORDER BY Errors DESC

HTTP errors ordered by Url and Status:

SELECT cs-uri-stem AS Url, sc-status AS Status, COUNT(*) AS Errors FROM ex*.log WHERE (sc-status >= 400) GROUP BY Url, Status ORDER BY Errors DESC

Win32 error codes by total and page:

SELECT cs-uri-stem AS Url, WIN32_ERROR_DESCRIPTION(sc-win32-status) AS Error, Count(*) AS Total FROM ex*.log WHERE (sc-win32-status > 0) GROUP BY Url, Error ORDER BY Total DESC

HTTP methods (GET, POST, etc.) used per Url:

SELECT cs-uri-stem AS Url, cs-method AS Method, Count(*) AS Total FROM ex*.log WHERE (sc-status < 400 or sc-status >= 500) GROUP BY Url, Method ORDER BY Url, Method

Bytes sent from the server:

SELECT cs-uri-stem AS Url, Count(*) AS Hits, AVG(sc-bytes) AS Avg, Max(sc-bytes) AS Max, Min(sc-bytes) AS Min, Sum(sc-bytes) AS TotalBytes FROM ex*.log GROUP BY cs-uri-stem HAVING (Hits > 100) ORDER BY [Avg] DESC

Bytes sent from the client:

SELECT cs-uri-stem AS Url, Count(*) AS Hits, AVG(cs-bytes) AS Avg, Max(cs-bytes) AS Max, Min(cs-bytes) AS Min, Sum(cs-bytes) AS TotalBytes FROM ex*.log GROUP BY Url HAVING (Hits > 100) ORDER BY [Avg] DESC

There’s an entire book about LogParser, and Mike Gunderloy even started an unofficial LogParser fansite.

Here are a few other articles I found that touch on different aspects of LogParser:

- Graphing PING results

- Generating XML output and Excel 2003 XML pivots

- Auditing the Event Logs

- Querying an RSS Feed

- Professor Windows: How LogParser Works

Although LogParser is 96.44% awesome, there are a few things that I didn’t like about it:

- I really, really need a standard deviation function. Min, Max, and Avg are nice but totally inadequate for determining how variable something is.

- The graphing output is cool – but it’s also a MS Office dependency. If you try to graph something on a machine without Office installed, you’ll get an error.

- The automatic detection of column types in CSV files isn’t always reliable. This meant I couldn’t graph some numeric values in my PerfMon dumps because LogParser decided they were strings. I couldn’t find any way to force a column to be detected as a certain type, either.

Of course, the idea of SQL being used to query a bunch of stuff isn’t exactly a new one; Microsoft’s WQL (WMI Query Language) is similar but more annoying and less powerful. And you’ll get tons of hits if you logically extend this concept to querying HTML, too. Just try searching Google for Web Query Language.